Notarizing Container Images Using JFrog’s API

Trust is a journey, not a state that lasts forever. Many customers we speak with store artifacts and container images in a private registry to keep them secure. But trust is not only about vulnerabilities; it also depends on other attributes such as licenses, existing contracts, and new versions.

Therefore, the trust level can change from one moment to the next. Codenotary keeps the trust level up to date by letting you notarize artifacts or container images with a different trust level (Trusted, Untrusted, or Unsupported). That happens without touching the file or container and does not require any redistribution.

This blog post covers a typical combination of JFrog Artifactory and Codenotary Community Attestation Service. The commercial offering Codenotary Cloud works similarly and has a richer feature set when it comes to user management, SBOMs, and vulnerability scanning.

Notarizing software artifacts shouldn’t be cumbersome. Ideally, it is integrated into the DevOps process and happens mostly in the background. But what are the best initial steps to set it up? A good starting point for implementing software artifact notarization is Artifactory, and a logical first step is to notarize all images and artifacts that are already stored there. This post looks into automated container image notarization using JFrog’s REST API and the Community Attestation Service from Codenotary.

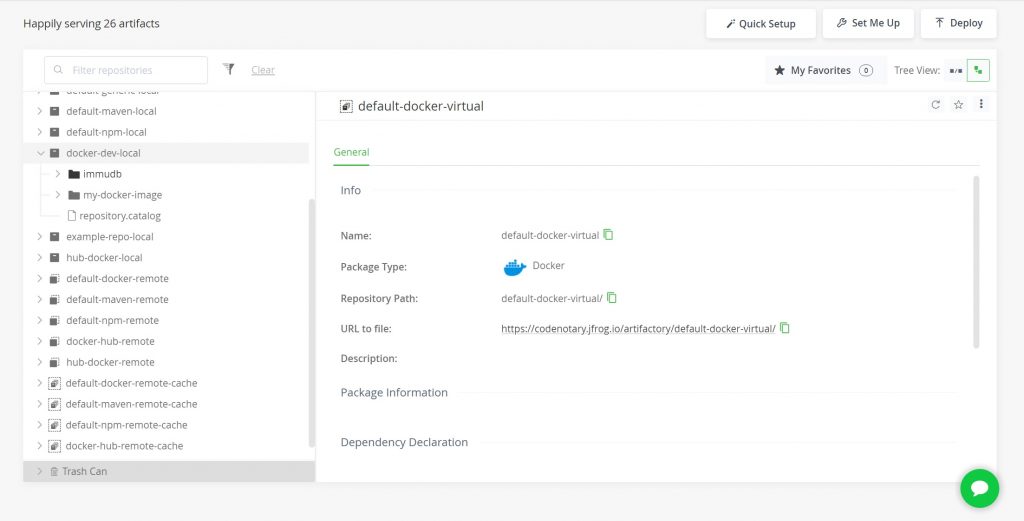

Setting up a Docker Repository in JFrog

JFrog is available either as a self-hosted trial version or for free in the cloud. Docker is the other prerequisite. Log in to JFrog and create three repositories:

| Repository-Key | Type |
| --- | --- |
| docker-dev-local | local |
| docker-dev-remote | remote |
| docker | virtual |

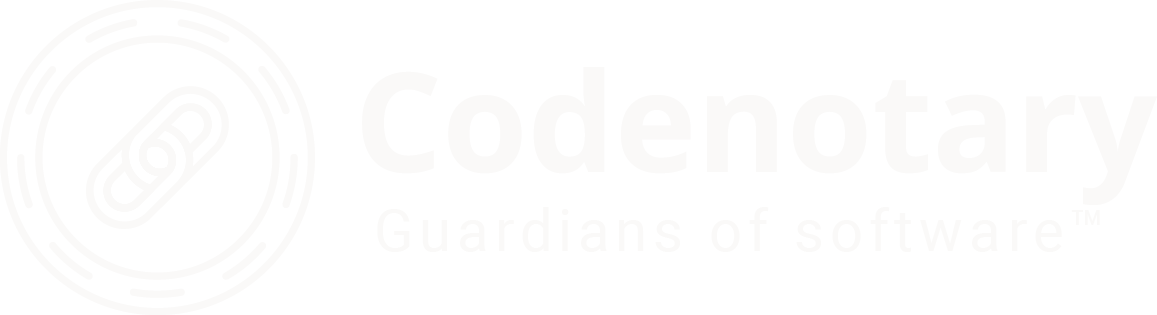

The local repository can be created with standard settings:

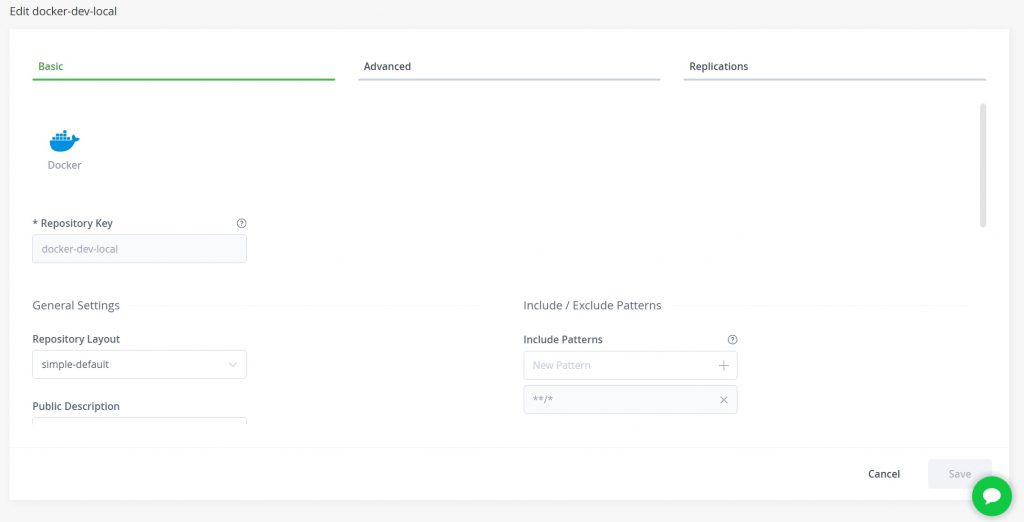

Creating the remote repository needs a little more attention because it proxies Docker Hub. Docker Hub only accepts tokens here, so create an access token in your Docker Hub account first. If manifest errors occur, uncheck “Block pulling of image manifest V2 ….”

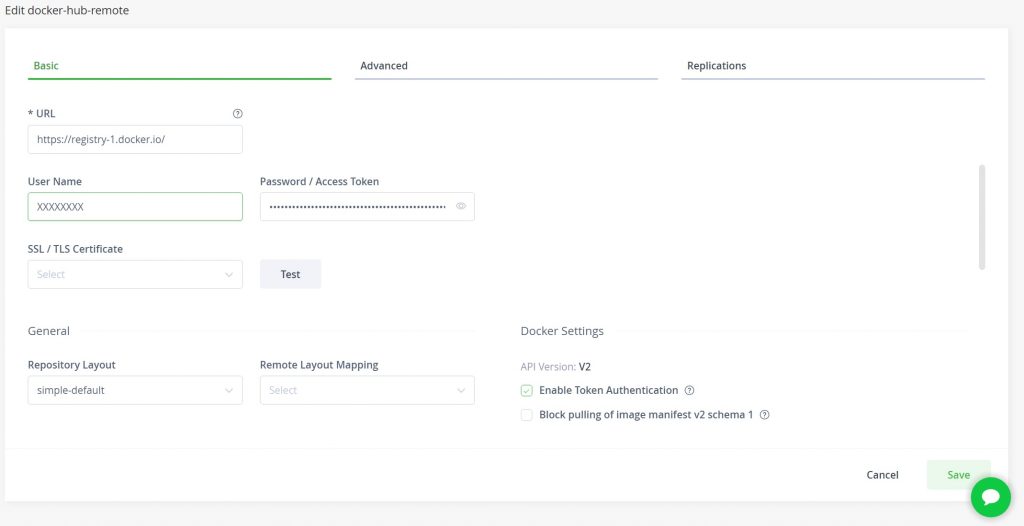

The virtual repository combines the local Docker repository and the remote repository. Add both repositories to it and set the local repository docker-dev-local as the Default Deployment Repository.
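The same three repositories can also be described as JSON, which helps if you would rather script the setup than click through the UI. The sketch below only prints the payloads and sends nothing. It assumes Artifactory's repository configuration fields `rclass`, `packageType`, `url`, `repositories`, and `defaultDeploymentRepo`, and that Docker Hub is proxied via `https://registry-1.docker.io/`. The function name `repo_payload` is made up for this example.

```shell
#!/bin/bash
# Hypothetical helper: print a repository configuration payload for one of
# the three repositories from the table. Nothing is sent to JFrog here.
repo_payload() {
  case "$1" in
    docker-dev-local)
      printf '{"key":"%s","rclass":"local","packageType":"docker"}\n' "$1" ;;
    docker-dev-remote)
      # Assumption: Docker Hub is reached through its registry endpoint.
      printf '{"key":"%s","rclass":"remote","packageType":"docker","url":"https://registry-1.docker.io/"}\n' "$1" ;;
    docker)
      # The virtual repository aggregates both and deploys to the local one.
      printf '{"key":"%s","rclass":"virtual","packageType":"docker","repositories":["docker-dev-local","docker-dev-remote"],"defaultDeploymentRepo":"docker-dev-local"}\n' "$1" ;;
    *)
      echo "unknown repository key: $1" >&2
      return 1 ;;
  esac
}

# Print the payload for the virtual repository.
repo_payload docker
```

If your edition allows creating repositories over REST, a payload like this would typically be sent with a `PUT` request to `/artifactory/api/repositories/<key>`. Whether that call is available on the free cloud tier is not something this post verifies.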

Getting Containers into the JFrog Repository

Our repositories are still empty, so how do we add our images to them? Log in to the registry, tag the image with the path of the virtual repository, and push it:

docker login <your_jfrog_installation>.jfrog.io

docker tag <your_container_image> <your_jfrog_installation>.jfrog.io/docker/<your_container_imagename>

docker push <your_jfrog_installation>.jfrog.io/docker/<your_container_imagename>:latest

Local container images can be listed with "docker image ls". If you use the self-hosted JFrog version without SSL and a domain, add your local installation to Docker’s daemon.json file first.
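For a self-hosted installation served over plain HTTP, Docker usually has to be told to treat the registry as insecure. A minimal sketch, assuming the daemon.json change in question is an `insecure-registries` entry. The address `artifactory.local:8082` and the function name `insecure_registry_json` are hypothetical stand-ins.

```shell
#!/bin/bash
# Print a daemon.json fragment that marks one registry as insecure (HTTP).
# The argument is the host:port of the self-hosted installation.
insecure_registry_json() {
  printf '{\n  "insecure-registries": ["%s"]\n}\n' "$1"
}

# Hypothetical address; replace it with your own host and port.
insecure_registry_json "artifactory.local:8082"
```

Merge the printed entry into your existing daemon.json (commonly `/etc/docker/daemon.json` on Linux) instead of overwriting the file, then restart the Docker daemon so the setting takes effect.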

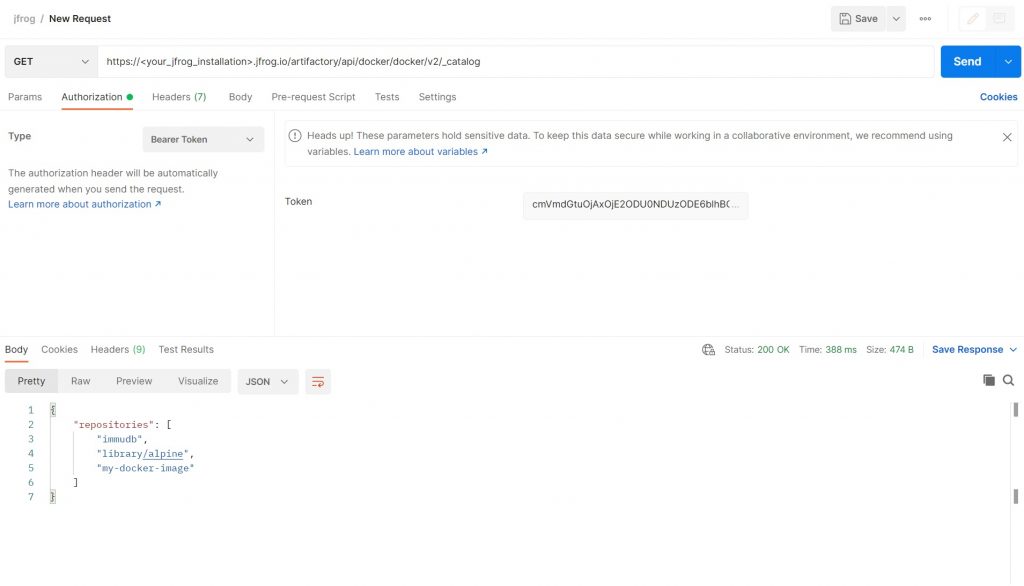

Querying the JFrog API

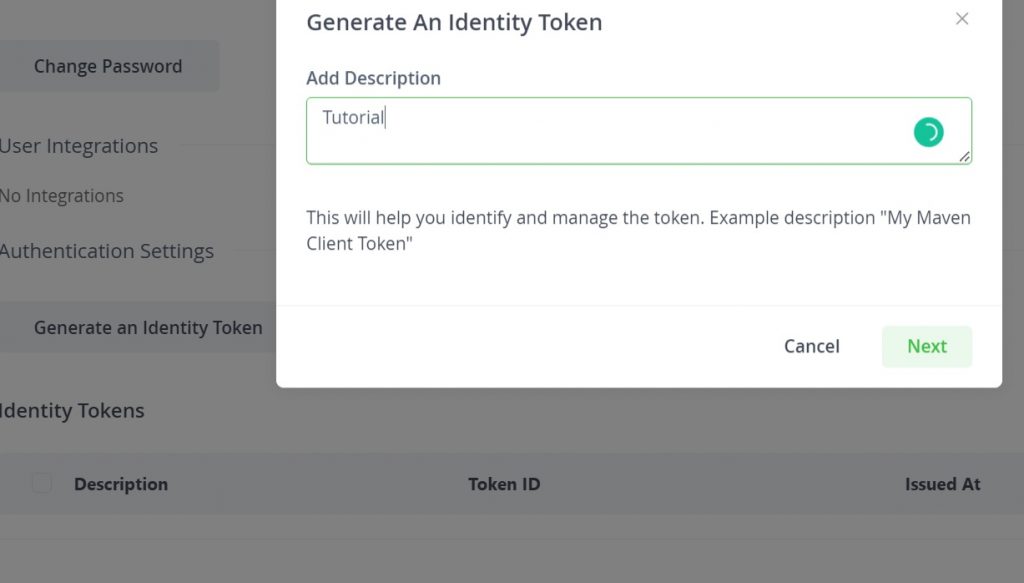

We now want to query the images we have added to our repository. For that, we need a token and the List Docker Repositories function of JFrog's API. The API key can be obtained from your JFrog installation: navigate to “Edit Profile”.

The List Docker Repositories function of the JFrog API lists all images in your repository. The URL is <your_jfrog_installation>.jfrog.io/artifactory/api/docker/<your_repository_name>/v2/_catalog.
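To make the request and the response shape concrete, here is a small sketch that builds the catalog URL and extracts image names from a response. It assumes `jq` is installed and that the endpoint returns a JSON object with a `repositories` array. The helper names `catalog_url` and `image_names`, the installation name `mycompany`, and the sample image names are illustrative only.

```shell
#!/bin/bash
# Build the List Docker Repositories URL from installation name and repository key.
catalog_url() {
  local installation="$1" repo="$2"
  printf 'https://%s.jfrog.io/artifactory/api/docker/%s/v2/_catalog\n' "$installation" "$repo"
}

# Read a _catalog response on stdin and print one image name per line (needs jq).
image_names() {
  jq -r '.repositories[]'
}

# Made-up sample response in the assumed shape of the endpoint's answer.
sample='{"repositories":["alpine","myapp/backend"]}'

catalog_url "mycompany" "docker"
if command -v jq >/dev/null 2>&1; then
  printf '%s\n' "$sample" | image_names
fi
```

Against a real installation, the two helpers could be combined as `curl -s -H "Authorization: Bearer $TOKEN" "$(catalog_url mycompany docker)" | image_names`, where `TOKEN` holds the token from “Edit Profile”.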

Creating a Bash Script That Notarizes All Container Images from JFrog

We now create a Bash script that queries JFrog for a list of Docker images, pulls each image, and notarizes it with the Community Attestation Service, an open source service that helps you secure and trust your software.

#!/bin/bash

# Log in to the Community Attestation Service; SIGNER_ID is your CAS e-mail address

export CAS_API_KEY="<your_api_key>"; cas login

export SIGNER_ID="<your_cas_mail>"

# Registry host and the List Docker Repositories endpoint of the virtual repository "docker"

registry="<your_jfrog_installation>.jfrog.io"

url="https://${registry}/artifactory/api/docker/docker/v2/_catalog"

bearer="<bearer that can be created in edit profile of JFrog>"

# Read the image names from the "repositories" array of the JSON response

DOCKER_LIST=($(curl -s -X GET "${url}" -H "Accept: application/json" -H "Authorization: Bearer ${bearer}" | jq -r '.repositories[]'))

# Iterate through the list of Docker images

for i in "${DOCKER_LIST[@]}"

do

  echo "$i"

  docker pull "${registry}/docker/$i"

  cas notarize --bom "docker://${registry}/docker/$i"

done
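The loop pulls each image without a tag, so Docker fetches only `latest`. If other tags matter, one option is to expand every image name into `name:tag` references first. The sketch below assumes Artifactory's List Docker Tags endpoint (`.../v2/<image>/tags/list`) and a response with `name` and `tags` fields. `tags_url` and `image_refs` are illustrative names, and the sample data is made up.

```shell
#!/bin/bash
# Build the assumed List Docker Tags URL for one image in a repository.
tags_url() {
  local installation="$1" repo="$2" image="$3"
  printf 'https://%s.jfrog.io/artifactory/api/docker/%s/v2/%s/tags/list\n' "$installation" "$repo" "$image"
}

# Turn a tags/list response on stdin into name:tag references (needs jq).
image_refs() {
  jq -r '.name as $n | .tags[] | "\($n):\(.)"'
}

# Made-up sample response in the assumed shape.
sample='{"name":"myapp/backend","tags":["1.0","latest"]}'

tags_url "mycompany" "docker" "myapp/backend"
if command -v jq >/dev/null 2>&1; then
  printf '%s\n' "$sample" | image_refs
fi
```

Each printed reference could then replace `$i` in the pull and notarize commands, at the cost of one extra API call per image.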